Technical operations teams are often the first and last line of defense when it comes to delivering flawless streaming experiences. So, it’s important to pinpoint the metrics that matter most when monitoring performance and diagnosing issues.

Here are the top five metrics tech ops teams need to know:

Streaming Performance Index (SPI)

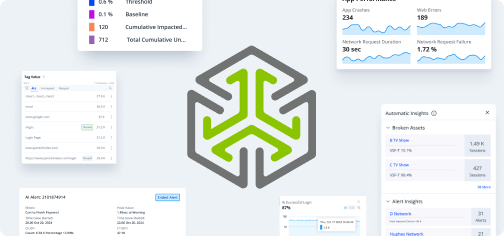

Conviva’s proprietary SPI score looks at benchmark thresholds and grades the overall quality of every stream based on video start failure (VSF), exits before video start (EBVS), rebuffering ratio, video playback failures (VPF), video startup time (VST), and picture quality. Exclusive to Conviva, SPI is a great metric for tech ops because it can be broken down by different dimensions, such as device and country, to better understand the experience across audience segments and endpoints. In addition to providing a score, benchmarks contextualize the score so that you can quantify how your performance ranks compared to your industry peers.

Rebuffering ratio

Rebuffering occurs when the video stalls during playback and the viewer must wait for it to resume. Frequent rebuffering is a major source of poor quality of experience and often leads viewers to abandon the content. A consistently high rebuffering ratio, or a significant increase in this metric, can indicate declining overall network performance and a growing likelihood of viewer churn. The rebuffering ratio gives a quick representation of the overall quality of a viewer’s experience.

Conviva measures rebuffering ratio across all dimensions, for both content and ads, so you can understand which piece of your delivery puzzle is impacting the greatest number of viewers. This metric can also help you troubleshoot the delivery network and pinpoint which part of it is the root cause of high rebuffering.
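To make the idea of breaking rebuffering ratio down by dimension concrete, here is a minimal sketch. It assumes the commonly used definition of rebuffering ratio (time spent rebuffering divided by playing time plus rebuffering time); this article does not spell out Conviva's exact calculation. The session fields (`play_s`, `rebuffer_s`, `device`, `cdn`) and the sample values are hypothetical.

```python
from collections import defaultdict


def rebuffering_ratio_by(sessions, dimension):
    """Return {dimension value: rebuffering ratio} for a list of session dicts.

    Assumed definition: rebuffer time / (play time + rebuffer time),
    aggregated over all sessions that share the same dimension value.
    """
    rebuffer_time = defaultdict(float)
    total_time = defaultdict(float)
    for session in sessions:
        key = session[dimension]
        rebuffer_time[key] += session["rebuffer_s"]
        total_time[key] += session["rebuffer_s"] + session["play_s"]
    # Skip groups with no recorded time to avoid dividing by zero.
    return {k: rebuffer_time[k] / total_time[k] for k in total_time if total_time[k] > 0}


# Hypothetical sample data: durations are in seconds.
sessions = [
    {"device": "tv", "cdn": "A", "play_s": 570.0, "rebuffer_s": 30.0},
    {"device": "tv", "cdn": "B", "play_s": 400.0, "rebuffer_s": 0.0},
    {"device": "mobile", "cdn": "A", "play_s": 90.0, "rebuffer_s": 10.0},
]

by_device = rebuffering_ratio_by(sessions, "device")
by_cdn = rebuffering_ratio_by(sessions, "cdn")
print(by_device)
print(by_cdn)
```

Running the same function over different dimensions is what lets you see, for example, that one CDN carries all of the rebuffering while another carries none.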

Video playback failures (VPF)

A video playback failure occurs when playback terminates due to an error, such as video file corruption, insufficient streaming resources, or a sudden interruption in the video stream. VPF is an important measure of service quality and audience engagement, especially when a large percentage of plays terminate this way. A VPF represents the worst possible experience a viewer can have because it interrupts the stream completely, so they can’t watch. Because of this, it’s an extremely important metric to monitor and troubleshoot.

Attempts

Attempts counts every attempt to play a video, initiated when a viewer clicks play or a video auto-plays. Paired with other metrics, attempts can reveal a wide variety of issues: how many attempts resulted in successful plays, failed plays, and everything in between.

It’s important to know attempts for two reasons. First, you want to know how many plays or auto-plays your content received. Second, you want to know what percentage of them were successful. By comparing attempts with successful video starts, you can quickly identify specific content or ads with encoding problems, player problems, or ad errors, and diagnose the root cause of a drop-off in engagement at the beginning of a viewer session.
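The comparison of attempts with successful starts can be sketched as a simple per-asset success rate. This is an illustration only: the event shape `(asset_id, outcome)`, the outcome labels, and the 0.5 threshold are assumptions made for the example, not part of any Conviva API.

```python
from collections import Counter


def start_success_rate(attempt_events):
    """Return {asset_id: successful starts / attempts}.

    attempt_events is an iterable of (asset_id, outcome) pairs, where any
    outcome other than "started" counts as an attempt that did not play.
    """
    attempts = Counter()
    starts = Counter()
    for asset_id, outcome in attempt_events:
        attempts[asset_id] += 1
        if outcome == "started":
            starts[asset_id] += 1
    return {asset_id: starts[asset_id] / attempts[asset_id] for asset_id in attempts}


def flag_low_success(rates, threshold=0.5):
    """Return asset ids whose success rate is below the threshold, worst first."""
    flagged = [asset_id for asset_id, rate in rates.items() if rate < threshold]
    return sorted(flagged, key=lambda asset_id: rates[asset_id])


# Hypothetical sample data: one episode and one ad creative.
events = [
    ("ep1", "started"), ("ep1", "started"), ("ep1", "failed"), ("ep1", "started"),
    ("ad7", "failed"), ("ad7", "exited_before_start"), ("ad7", "started"), ("ad7", "failed"),
]

rates = start_success_rate(events)
print(rates)
print(flag_low_success(rates))
```

An asset whose success rate falls well below the rest is a natural first place to look for an encoding, player, or ad error.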

Concurrent plays

Concurrent plays is the maximum number of simultaneously active sessions during a given interval. Monitoring concurrent plays is important to help you define your audience, determine video quality, and track which customers are actively engaged with a video. Concurrent plays is very helpful when planning network capacity for large-scale live events such as sporting events and premieres. For tech ops, it’s particularly important to see whether peak concurrency was a factor in a quality of experience issue or even caused it.
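The definition above (the maximum number of simultaneously active sessions in an interval) can be computed with a standard sweep over session start and end times. The sketch below assumes each session is a `(start, end)` pair of numeric timestamps treated as a half-open interval, so a session that ends at the same instant another begins does not overlap with it; the function name and sample data are hypothetical.

```python
def peak_concurrent_plays(sessions, window_start, window_end):
    """Return the maximum number of sessions active at once within the window.

    sessions is an iterable of (start, end) timestamps; each session is
    treated as active on the half-open interval [start, end).
    """
    events = []
    for start, end in sessions:
        # Clip each session to the window and drop those that fall outside it.
        clipped_start = max(start, window_start)
        clipped_end = min(end, window_end)
        if clipped_start < clipped_end:
            events.append((clipped_start, 1))
            events.append((clipped_end, -1))
    # Sorting by (time, delta) processes ends (-1) before starts (+1) at ties.
    events.sort()
    current = peak = 0
    for _, delta in events:
        current += delta
        peak = max(peak, current)
    return peak


# Hypothetical sample data: timestamps in seconds from the start of an event.
sessions = [(0, 50), (10, 30), (20, 60), (30, 40), (70, 80)]
print(peak_concurrent_plays(sessions, 0, 100))  # 3
```

Comparing this peak against the time of a quality of experience incident is one way to check whether concurrency was a contributing factor.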

As the team responsible for ensuring a flawless experience for everyone, on every screen, every time, tech ops teams have a lot of weight on their shoulders. With access to the right metrics and a full understanding of network performance and viewer experience, the job of tech ops will be much easier, more efficient, and more effective.