In the early days of streaming, industry players were hyper-focused on growing their subscriber bases. Now, in what many experts are deeming a “make or break” year for streaming, companies are shifting their focus to profitability.

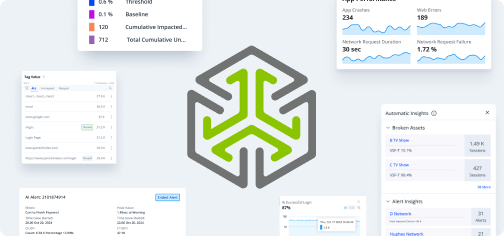

Quality of experience (QoE) is becoming the main deciding factor in today’s competitive landscape. Improving it with real-time stream analytics can help you retain customers, boost engagement, and even reduce operational costs.

Key Takeaways:

- You need to balance programming priorities against QoE optimization as you reduce content spending in 2023.

- Adding subscribers is good, but retaining and monetizing the subscribers you already have is the low-hanging fruit.

- You’ll want to explore ways to generate revenue without driving up costs.

- Differentiation is key in this increasingly crowded market.

- A real-time streaming analytics system gives you the insights you need to compete.

1. Cut Costs Without Sacrificing Quality

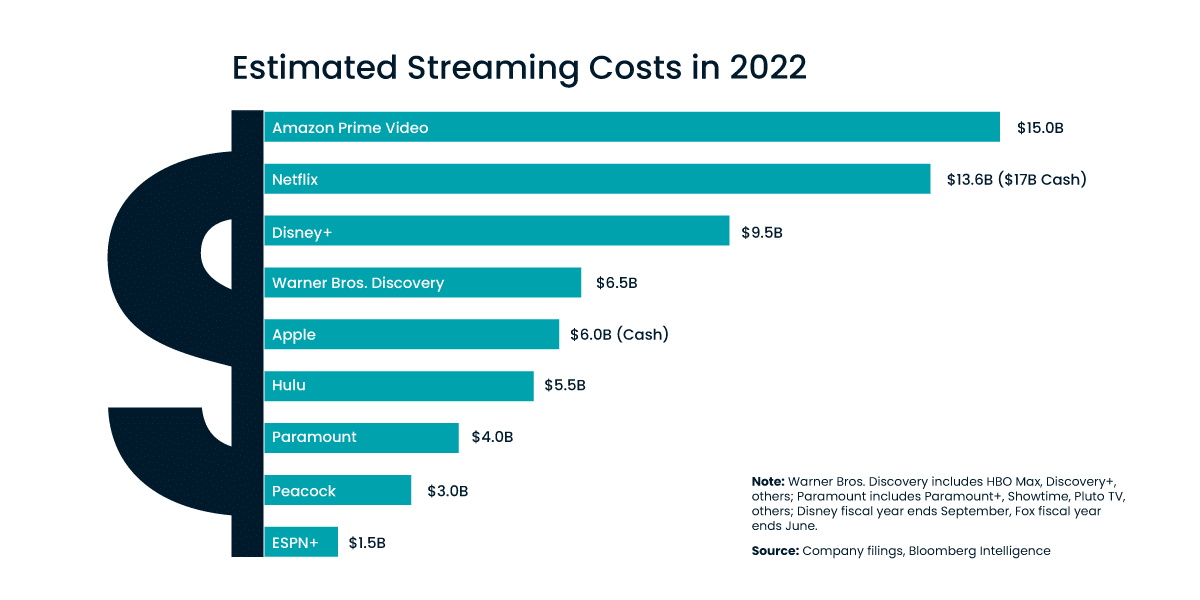

For years, streaming services have thrown money at content. This strategy made sense when the streaming industry was an emerging business and its major players were building their catalogs to attract subscribers.

Today, however, the soaring cost of content development is harder to sustain, and most big platforms have refocused on increasing profit margins.

Committing resources to programming is a media-publishing basic. However, what is available to watch is far from the only differentiator in today’s market.

Audiences want a smooth, responsive interface and a low-error video experience. The ops side matters. Real-time streaming analytics give you visibility into your operations as they happen, enabling progress toward several key business objectives:

- Lower the total cost of ownership of your multi-CDN strategy

- Reduce customer service costs by pinpointing the events that most often result in tickets or complaints

- Lower subscriber churn by delivering the same (or better) QoE as your competitors

These types of actions require detailed insight. In some cases, such as network-related buffering, you need to know about the problem and react to it while it is still developing. An integrated, up-to-the-minute stream analytics and issue-resolution system makes this possible, and it’s more efficient than you might expect if you’re used to the older data-warehouse approach.
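To make “react to the situation as it develops” more concrete, here is a minimal sketch of one common real-time QoE signal: a rebuffering ratio (stalled time divided by total viewing time) computed over a sliding window of player heartbeats. The `Heartbeat` and `RebufferMonitor` names, the 60-second window, and the 2% alert threshold are hypothetical choices for illustration; this is not Conviva’s implementation or API.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Heartbeat:
    """A hypothetical player heartbeat covering the seconds since the previous beat."""
    timestamp: float      # when the heartbeat was sent, in seconds
    play_time: float      # seconds of video actually played
    rebuffer_time: float  # seconds spent stalled waiting for data


class RebufferMonitor:
    """Sliding-window rebuffering-ratio monitor (illustrative sketch only).

    Assumes heartbeats arrive in timestamp order, so old ones can be
    evicted from the left of the deque.
    """

    def __init__(self, window_s: float = 60.0, threshold: float = 0.02):
        self.window_s = window_s    # how much recent history to consider
        self.threshold = threshold  # alert when stalls exceed this share of viewing time
        self.beats = deque()        # heartbeats currently inside the window
        self.play = 0.0
        self.rebuffer = 0.0

    def ratio(self) -> float:
        """Rebuffering ratio: stalled time divided by total (played + stalled) time."""
        total = self.play + self.rebuffer
        return self.rebuffer / total if total > 0 else 0.0

    def add(self, hb: Heartbeat) -> bool:
        """Ingest one heartbeat and return True if the window is over the alert threshold."""
        self.beats.append(hb)
        self.play += hb.play_time
        self.rebuffer += hb.rebuffer_time
        # Evict heartbeats older than the window so the metric reflects current conditions.
        cutoff = hb.timestamp - self.window_s
        while self.beats and self.beats[0].timestamp <= cutoff:
            old = self.beats.popleft()
            self.play -= old.play_time
            self.rebuffer -= old.rebuffer_time
        return self.ratio() > self.threshold


# Usage: a burst of buffering trips the alert, then clears once it ages out.
monitor = RebufferMonitor(window_s=60.0, threshold=0.02)
print(monitor.add(Heartbeat(0.0, 10.0, 0.0)))    # False
print(monitor.add(Heartbeat(10.0, 9.0, 1.0)))    # True (1 s stalled out of 20 s = 5%)
print(monitor.add(Heartbeat(100.0, 10.0, 0.0)))  # False (earlier beats left the window)
```

Keeping running totals means each heartbeat is processed in constant amortized time, which is what lets a check like this run continuously rather than as a batch query against a data warehouse.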

2. Encourage Customer Engagement

With more than 200 streaming services crowding the market, many companies are looking for ways to discourage subscribers from platform-hopping. To do this, you need to increase customer engagement.

One option is to experiment with adding more live programming, such as live sports and performances, to your platform. Live programming is a risk because it comes with relatively high licensing fees, but it also tends to have a more engaged audience than video on demand.

Live programming creates a shared viewing experience, leading to higher engagement and social-media buzz that may be missing from more solitary viewing. Take advantage of this volatile situation by improving the viewer experience and using analytics to safeguard a network that could be overloaded within minutes as a stream gets shared.

QoE is a major factor in viewers’ decisions to switch from your service to another. You only get one chance with a live broadcast. Don’t lose ad revenue to buffering; use real-time streaming analytics to catch issues fast.

3. Increase Revenue

In 2022, the average person subscribed to 3.55 streaming services at a combined cost of $42.38 per month. By contrast, the average cable bill was $79 per month. That gap of roughly $37 per month suggests room for growth in streaming revenue if you can find the right mix of revenue-boosting solutions.

Price Increases

Many streaming businesses increased subscription prices in 2022, and industry experts expect that trend to continue into 2023. However, this is a challenging proposition at a time when you may also be cutting spending on content.

You risk alienating subscribers by creating the perception that you’re charging more for less content. This makes maintaining the quality of content and accurately assessing what viewers want from your service critically important.

Ad-Supported Plans

Many streamers have already introduced ad-supported plans to provide a lower-cost option for price-sensitive customers. However, this model faces a challenging road in 2023 as both consumers and advertisers are likely to cut spending in light of a potential recession in the near future.

Price-conscious consumers may be ready to sign up for ad-supported plans just as advertisers become less willing to pay for ads. You’ll need to use your customer data to create targeted advertising that appeals to both consumers and advertisers, and to give advertisers data that supports your sales pitch, such as more accurate viewership metrics.

Cable 2.0

It’s a running joke that frustrated streaming consumers, tired of switching between services and paying for multiple subscriptions, wish they could get everything in one place, as they once did with cable.

In 2023, this may become more of a reality as large players consolidate their offerings and smaller ones merge to become more competitive. Frictionless experiences that require a single login and offer simple navigation and billing options may gain traction with many consumers.

4. Prioritize Quality of Experience

Regardless of where you sit in the streaming market, the name of the game is no longer just content selection. With varied audience interests and a spectrum of niche and big-name publishers, viewers are back to the old cable-era habit of flipping through channels.

You might not be able to differentiate entirely on content. What you can do, regardless of your programming strategy, is stand out as a provider of consistent, high-quality service:

- Make the most of a complex multi-CDN strategy by automating failover and eliminating common single points of failure.

- Use granular, census-derived insights to target metrics with the greatest improvement potential, widest audience impact, and most influence on QoE.

- Respond within minutes to issues that would otherwise go undetected, using complex metrics derived from your real-time stream analysis.
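The first point above, automated multi-CDN failover, can be sketched as a simple decision rule fed by real-time per-CDN health metrics. The sketch below is a minimal illustration under stated assumptions: the `CdnStats` and `pick_cdn` names, the CDN names, and the 1% error and 2% rebuffering thresholds are all hypothetical, and a real deployment would make this decision per region or per ISP rather than globally.

```python
from dataclasses import dataclass


@dataclass
class CdnStats:
    """Hypothetical per-CDN health snapshot aggregated from real-time player data."""
    name: str
    error_rate: float      # share of recent sessions that hit a playback error
    rebuffer_ratio: float  # share of recent viewing time spent buffering


def is_healthy(s: CdnStats, max_error: float, max_rebuffer: float) -> bool:
    """A CDN is healthy if both of its metrics are within the (assumed) limits."""
    return s.error_rate <= max_error and s.rebuffer_ratio <= max_rebuffer


def pick_cdn(current: str, stats: list[CdnStats],
             max_error: float = 0.01, max_rebuffer: float = 0.02) -> str:
    """Stay on the current CDN while it is healthy; otherwise fail over to the best alternative."""
    by_name = {s.name: s for s in stats}
    cur = by_name.get(current)
    if cur is not None and is_healthy(cur, max_error, max_rebuffer):
        return current  # no failover: avoid switching viewers unnecessarily

    candidates = [s for s in stats if s.name != current]
    if not candidates:
        return current  # nowhere to fail over to

    # Prefer healthy CDNs (False sorts before True), then lower error and rebuffering.
    best = min(candidates, key=lambda s: (not is_healthy(s, max_error, max_rebuffer),
                                          s.error_rate, s.rebuffer_ratio))
    return best.name


# Usage with made-up numbers: cdn-a is erroring, so traffic moves to cdn-b.
stats = [
    CdnStats("cdn-a", error_rate=0.05, rebuffer_ratio=0.01),
    CdnStats("cdn-b", error_rate=0.002, rebuffer_ratio=0.01),
    CdnStats("cdn-c", error_rate=0.001, rebuffer_ratio=0.04),
]
print(pick_cdn("cdn-a", stats))  # cdn-b
print(pick_cdn("cdn-b", stats))  # cdn-b (healthy, so no switch)
```

Note that cdn-c has the lowest error rate but is skipped because its rebuffering is over the limit; ranking on several QoE metrics at once, rather than on a single one, is the point of the example.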

Apart from the industry giants, most companies aren’t making full use of their data streams to optimize QoE. Excel at operations, and your audience will notice.

5. Focus on What Makes You Unique

The competition in the general entertainment sphere is fierce. The winners of the streaming wars will be the platforms that innovate to find a competitive edge — something they can provide to subscribers that other platforms can’t offer.

Anecdotal evidence is interesting, but it doesn’t provide actionable insight. You need high-quality, granular data, or you’ll lose your edge, and your audience along with it.

If you’re a big player in the industry with popular franchises, this may mean shifting spending to your most popular original content while cost-cutting by eliminating less popular content. If you’re a smaller company, it may mean assessing whether you have enough unique content to compete and, if not, considering strategic mergers with other platforms.

Boost Your Streaming Profitability With Conviva

The key to increasing your profitability is figuring out what makes your customers tick. Conviva can help you use your data to address issues in real time, keep viewers engaged, and improve customer retention. Check out our streaming insights platform or request a demo.