Meet Conviva

AVOD Experience

With subscriber retention and ad revenue at stake, you need a surefire way to understand and improve your audience’s experience to keep them coming back for more.

Do you need hours, sometimes days, to triage video operations issues?

Stop wasting time with expensive queries to data warehouses or complicated dashboards. Conviva Video & Ad Experience provides real-time monitoring and instant analytics on live and historical data, unified in a single workflow. Our platform benchmarks your streaming performance, highlights improvement opportunities, drills down to root cause, and alerts you to problems you might never have discovered, all before your viewers’ experience is impacted.

With Conviva, we have a much deeper understanding of what, where, when, and how our viewers are streaming. As we continue investing in the evolution of WarnerMedia and unlocking opportunities for growth across our brands, Conviva will remain a strategic partner for us.

ROBERT JONES, VP DATA PLATFORMS AND STRATEGY OPERATIONS, WARNERMEDIA

Connect the dots across all your systems, in real time.

Powered by the Conviva Operational Data Platform, Conviva automates the monitoring and optimization of streaming services across every viewer, device, and platform around the world so you can increase engagement, retention, and revenue.

Solution Benefits

Respond while viewers are still watching.

Unlike other solutions with delays of 40 minutes or more, our platform ingests, normalizes, and populates data at 10-second intervals. That lets you respond in real time, while viewers are still watching, and solve performance and reliability issues with automated detection, diagnostics, and root cause analysis.

Lower operational costs.

View all your streaming data through a single pane of glass, eliminating the need for additional diagnostics tools and resources.

Increase viewer engagement.

Optimize experiences and increase minutes watched based on viewer preferences and behaviors.

Increase ad and subscriber revenue.

Reduce churn and identify opportunities to increase ad fill rate, attract lookalike audiences, encourage viewership, and more.

Solution Capabilities

World-class streaming, delivered.

Conviva offers one platform with every capability you need to take your video streaming operations to the next level.

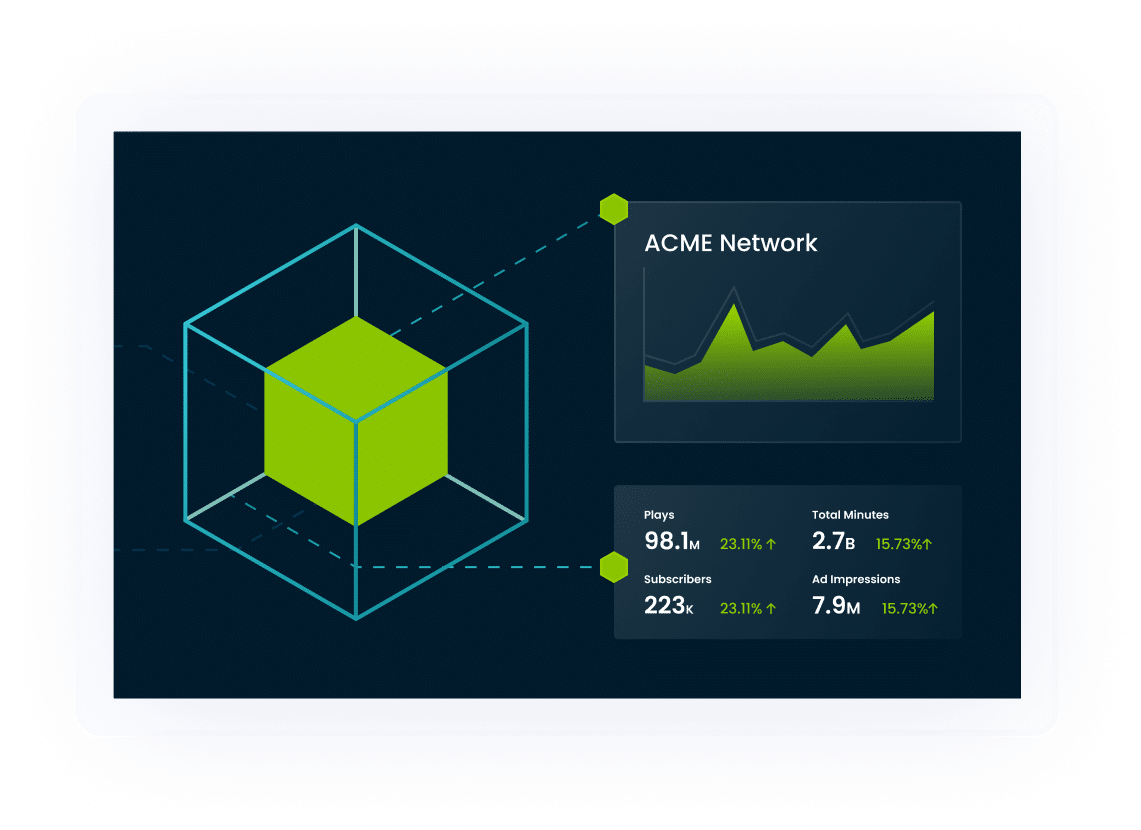

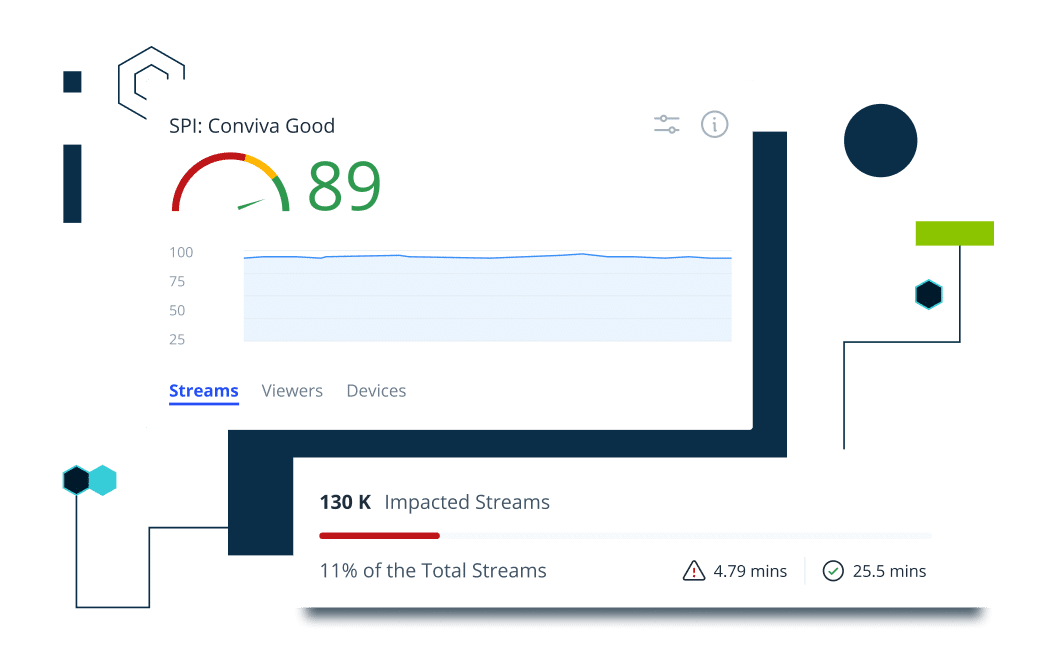

Business-level Streaming Performance Index (SPI)

SPI is a single metric that all your teams’ KPIs (key performance indicators) ladder up to. Because SPI is directly correlated to viewer engagement, a change of a single point (up or down) can mean a change of millions of dollars in revenue for your business. Viewers spend on average 3-5x more time in a video stream with a high SPI score, which translates into more ads watched, more engagement, and reduced customer churn.

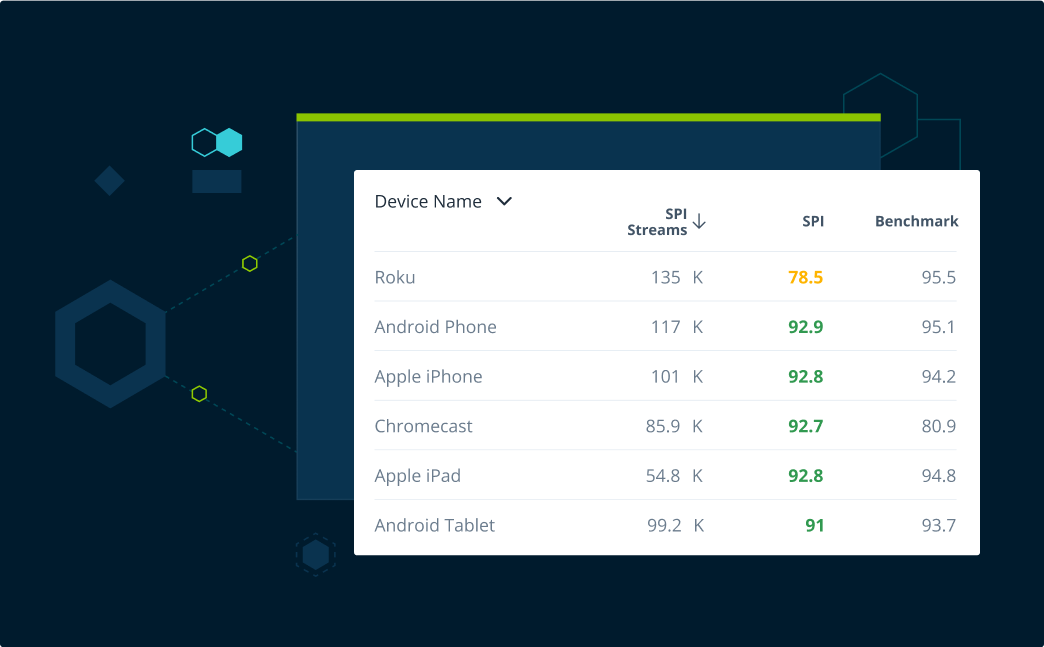

Industry Benchmarks

Benchmarks measure your streaming performance against the industry 95th percentile across more than a dozen different dimensions, including country, device, content category, and more. With Conviva, you can optimize every touchpoint your customers have with your service.

Improvement Opportunities

Conviva automatically highlights how you can improve your service quality and tells you exactly how you can raise your SPI score, in the most efficient way possible, to maximize engineering ROI (return on investment). This translates to increased viewer engagement and higher revenue at the lowest cost to your business.

Infinite Drilldown

Say goodbye to dashboarding. Infinite Drilldown enables you to explore multiple dimensions, triangulate, and identify the root cause of a service disruption without creating a dashboard or writing a query. Conviva enables you to drill down to root cause in just a few button clicks using live or historical data, without costly data science, warehousing, or compute resources.

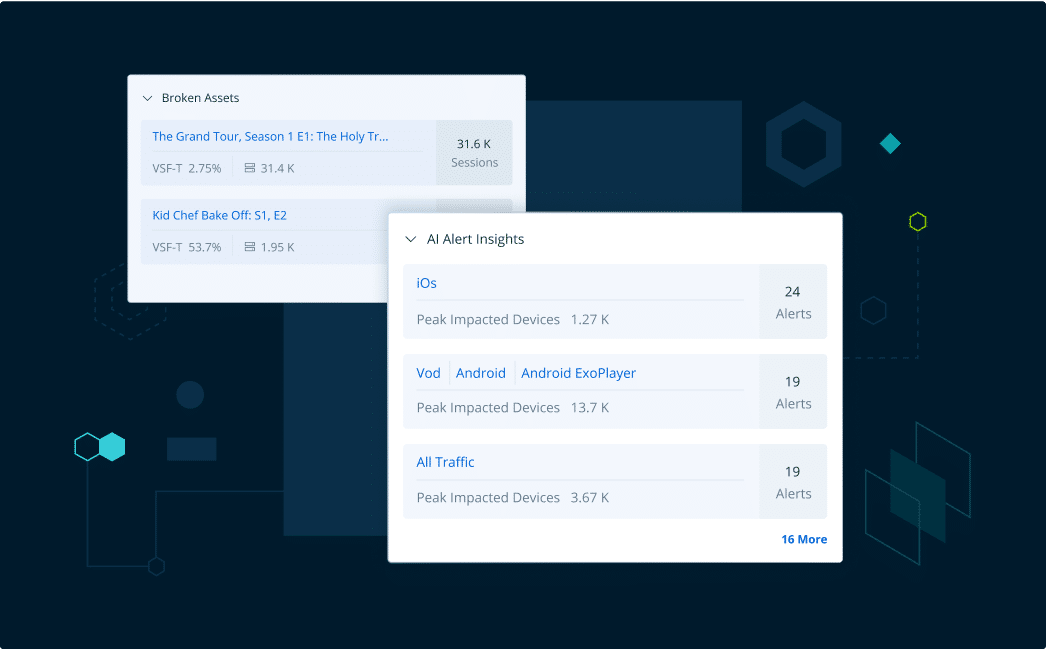

Automatic Insights and AI (Artificial Intelligence) Alerts

Automate your monitoring and analytics workflows, freeing up thousands of headcount hours across operations, data science, and support teams. Conviva enables individual operators to become dramatically more productive with a suite of automated capabilities that power both their break-fix workflows and their long-term degradation improvement workflows.

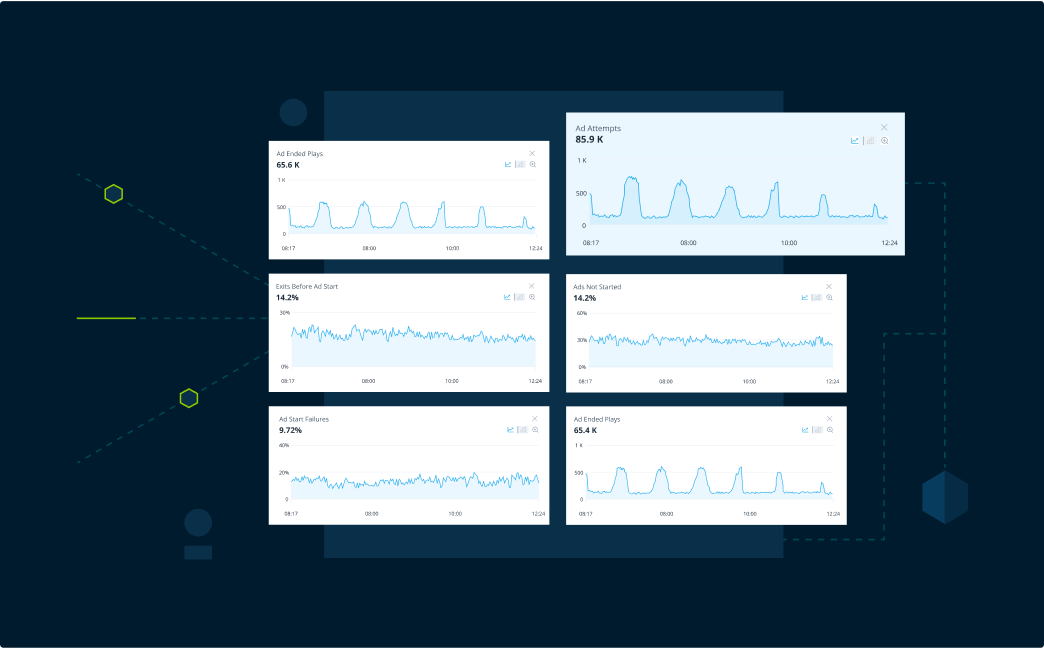

Ad Delivery

Detect and diagnose issues that negatively impact ad delivery and ad experiences, for higher advertising revenue.

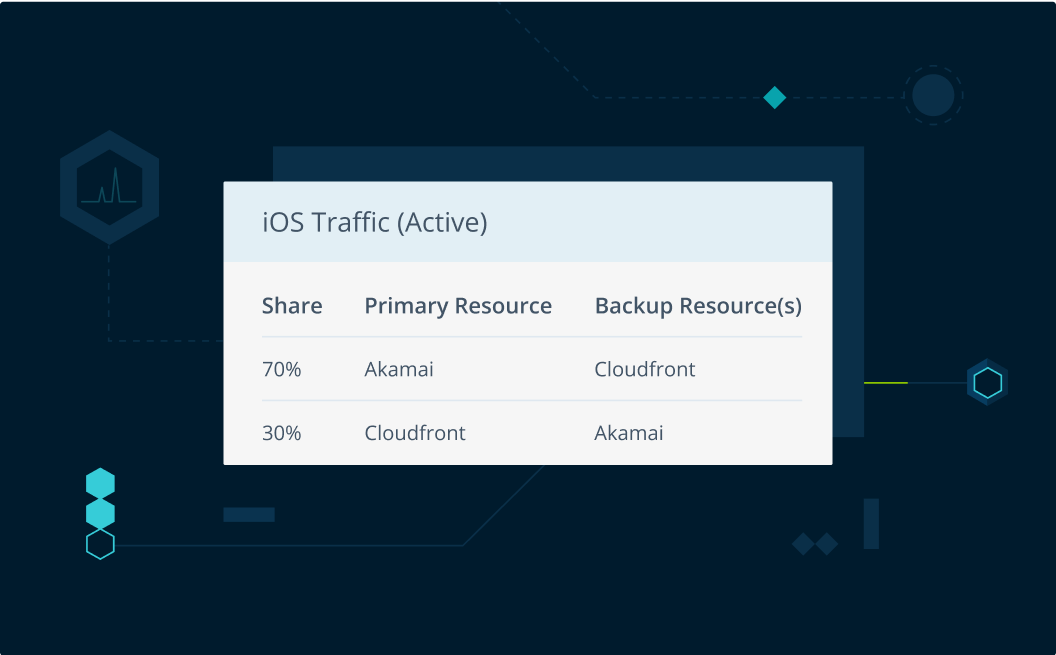

CDN Management

Optimize multi-CDN video delivery for every individual session, improving service resilience, viewer experience, and cost-effectiveness of delivery.

API Integrations and Data Feeds

Share and exchange data with any system, anytime. Although we analyze and normalize everything within the Conviva Operational Data Platform, we know that cross-functional teams need to house historical data and integrate with custom systems in many ways. Conviva supports this out of the box with powerful APIs and daily or hourly data feeds.